Today we’ll talk about online video streaming: its history, its future, key concepts and common high-level solutions.

Video Broadcasting Paradigm Shift

What was the precursor of online video streaming? The closest service in terms of functionality is, of course, television, and it will remain with us for a little longer.

The barrier to entry into the exclusive club of television networks was very high: it required specialized equipment, licenses and a connection to a dedicated distribution channel to broadcast the program over the air, by cable or by satellite.

The internet was bound to create an alternative when a few key conditions were met.

- a/v content could be encoded in digital format

- codecs made it possible to transmit a/v content through the available connections at an acceptable quality

- digital video cameras became affordable

In the 1990s all these factors came together to start the online video streaming revolution. We now take for granted the services that grew out of it, such as YouTube and Netflix.

To differentiate these from traditional broadcasting services, we will call them over-the-top (OTT for short). Over-the-top describes an a/v transmission made over the internet rather than over a dedicated connection. The internet was not originally designed to carry a/v signals, so creative solutions for encoding the signal had to be invented to work around its limitations.

Around 2010, the term cord-cutter was coined to describe people who cancel regular TV subscriptions in favor of internet-only connections and services.

More recently, the term cord-never has been gaining popularity. It mostly covers late Millennials and Gen-Z individuals who have never had a traditional TV connection.

From Buffering… to Live 4k And Beyond

Then

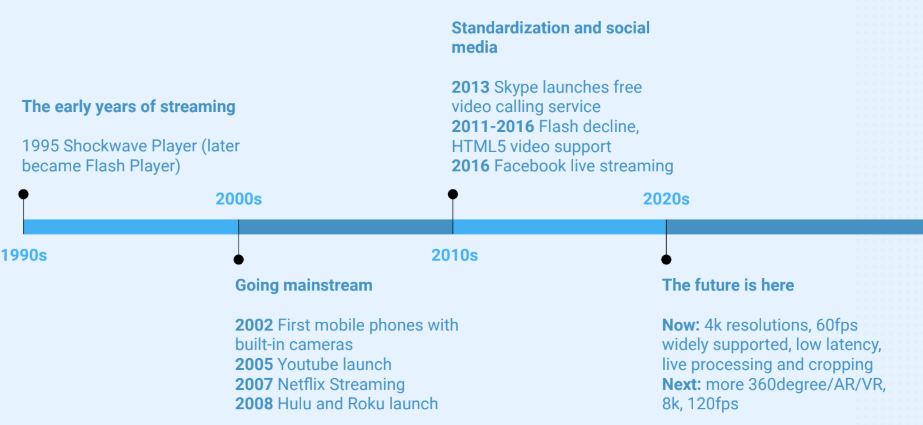

A key event enabling the streaming boom was the introduction of Shockwave Player in 1995, which later became Flash Player.

This technology allowed rich internet applications to publish and play back video content through the Real-Time Messaging Protocol (RTMP), which became a de facto standard in the industry.

In 2002, the first mobile phones with an integrated video camera became available, paving the way for the democratization of video content online.

Over the next six years, the main online TV players launched their on-demand video streaming services.

In 2013, Skype released its reliable video conferencing service for free.

Following a series of security vulnerabilities, performance limitations and Apple’s ban on Flash Player, Adobe dropped support for Flash technologies, leaving a great void in the streaming industry. Many companies struggled to replace legacy Flash-based solutions with more modern JavaScript clients, a daunting task given that native browser support for video was not yet properly standardized. The period between 2011 and 2018 saw many misguided bets on which solution and protocol would become the new de facto standard. The short answer is that none did. There is still a plethora of protocols, codecs and proprietary solutions that need to be pieced together based on the constraints of each project. The dream of a single, reliable and standardized way of streaming video remains a long way off.

In 2016, Facebook launched live streaming, and social media became a crowd-sourced broadcasting network, driving a new wave of growth in video traffic.

The technology now delivers impressive quality compared with the first YouTube video ever published, allowing 4k streams at 60fps in which we can all enjoy Game of Thrones.

And Now

Live processing of video content now allows for creative uses like augmented reality overlays and background replacement. We can see these use cases in Facebook Live, where we can play with animated masks and AR games, but also in more serious services like Skype for Business and Microsoft Teams. Teams recently released a feature called “Together mode” that cuts out the participants’ heads and pastes them over a predefined image, like a university classroom or a bar. It does all that in real time, offering a seamless and engaging experience for the users.

Recently, there has also been an explosion in video streaming demand. Driven by the Covid-19 lockdowns and the work-from-home trend, it has made this industry as profitable as ever, with prospects of impressive growth in the years to come.

In the near future we can look forward to more 360-degree and VR/AR streams, as well as the adoption of 8k video once the technology becomes widely affordable.

The Four Pillars Of Streaming

If you were to start a video streaming business today, what should you think about?

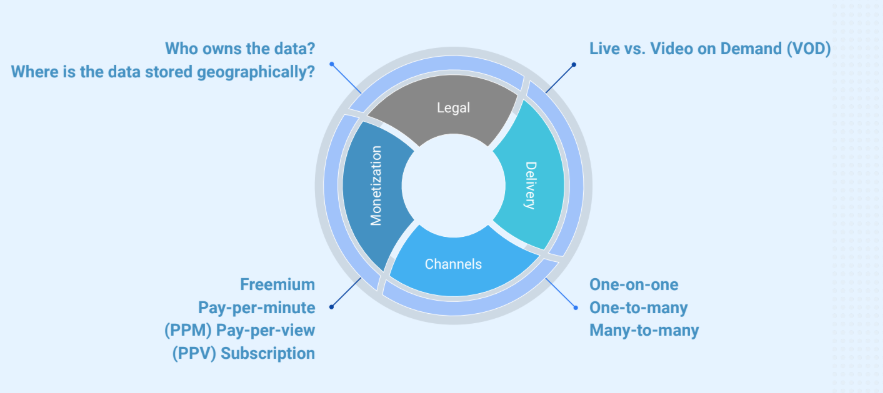

In terms of business, there are just four key concepts to decide on.

The first thing to think about is distribution. Here we have two alternatives: live video, or recorded content streamed on demand, known as video on demand (VOD).

Then, what is the cardinality of the publish and playback streams? It can be one-to-one, one-to-many or many-to-many.

How will the users be charged and who will pay? Will it be free for users and paid by ads? Will users pay per minute, pay per view or just a monthly subscription?

Furthermore, there is the legal side of the business. Who will own the content? Are there any regulations controlling the storage and distribution of the type of content you are handling? For instance, if you serve governments, they might have strict requirements that the data not be stored in other countries.

Delivery vs. Monetization

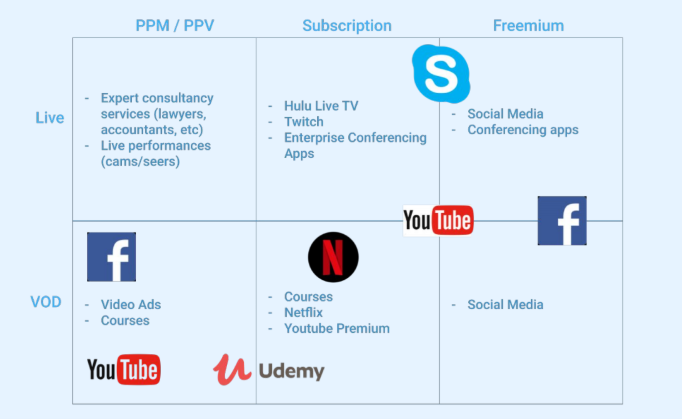

Let’s look at how delivery and monetization are handled by the businesses we know.

We can see that businesses can choose to use multiple models.

Notice how video ad revenue falls under pay per minute video on demand. The viewers enjoy a freemium service while the commercial watch time is paid for by the advertisers.

In the past year, YouTube diversified its revenue model, offering premium subscriptions to get rid of the freemium ads.

The 4 Keys For a Seamless Service

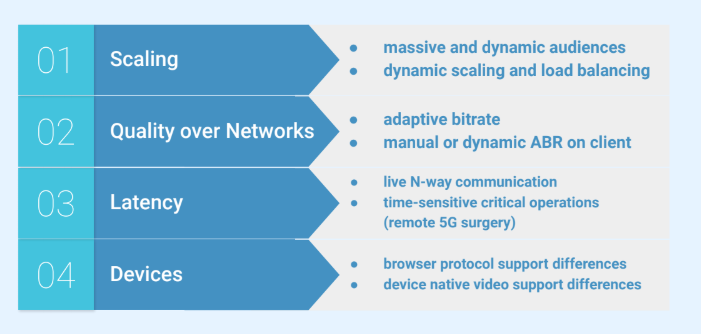

When implementing and integrating a video streaming service, there are some inescapable technical challenges. The first question is: how will the system scale under load?

Regular watching patterns on Netflix may be predictable to a degree, but premieres and live sporting events are known to surpass expectations quite often. When that happens, the system can fail to spin up new machines fast enough, and many clients will have a less than acceptable experience. In a badly designed system, everything can even crash altogether.
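To make the scaling problem concrete, here is a minimal capacity-planning sketch. The function name, the per-server capacity and the headroom factor are illustrative assumptions, not figures from any real platform.

```python
import math


def servers_needed(concurrent_viewers: int,
                   viewers_per_server: int,
                   headroom: float = 0.3) -> int:
    """Estimate how many servers an audience needs, keeping spare capacity.

    `headroom` is the fraction of each server deliberately left unused so
    that a sudden spike can be absorbed while new machines are still booting.
    All inputs are hypothetical numbers chosen by the operator.
    """
    if viewers_per_server <= 0:
        raise ValueError("viewers_per_server must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in the range [0, 1)")
    usable_capacity = viewers_per_server * (1 - headroom)
    # Always keep at least one server running, even with no audience.
    return max(1, math.ceil(concurrent_viewers / usable_capacity))
```

Calling `servers_needed(10_000, 1_000, headroom=0.5)` asks for twice as many machines as the raw division suggests. That margin is what buys time when a premiere exceeds the forecast.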

Dynamic scaling of the server infrastructure is one issue; handling variable internet speeds on the client side is another. Since usage shifted towards mobile devices, connection quality varies a lot when travelling between cell towers or switching between WiFi and mobile connections. To address this, the system must support dynamic bit-rate transitions on the client player side. Otherwise, the user will experience interruptions and service quality degradation.

Latency is a crucial issue when dealing with one-to-one or many-to-many live communication. Delays above 2 seconds will make conversations increasingly annoying. With the introduction of 5G networks, there have even been experiments with remote surgery, a field where latency can literally mean life or death.
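The core of a dynamic bit-rate transition can be sketched as picking the best rendition that fits the measured throughput. The bitrate ladder, the `Rendition` type and the safety factor below are assumptions made for illustration; real players use more elaborate heuristics that also look at buffer levels.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Rendition:
    name: str
    bitrate_kbps: int


# Hypothetical bitrate ladder; real services tune these values per title.
LADDER = (
    Rendition("240p", 400),
    Rendition("480p", 1200),
    Rendition("720p", 2800),
    Rendition("1080p", 5000),
)


def pick_rendition(measured_kbps: float,
                   ladder: Sequence[Rendition] = LADDER,
                   safety: float = 0.8) -> Rendition:
    """Return the highest-bitrate rendition that fits the available bandwidth.

    Only a fraction (`safety`) of the measured throughput is budgeted, so a
    small dip in connection speed does not immediately stall playback.
    """
    budget = measured_kbps * safety
    fitting = [r for r in ladder if r.bitrate_kbps <= budget]
    if not fitting:
        # Nothing fits: fall back to the lowest quality rather than stopping.
        return min(ladder, key=lambda r: r.bitrate_kbps)
    return max(fitting, key=lambda r: r.bitrate_kbps)
```

A player would call `pick_rendition` again after every downloaded segment, so a viewer moving from WiFi to a weak mobile connection drops to a lower quality instead of seeing the stream freeze.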

Today’s array of connected devices is so diverse in terms of software versions, browsers, screen sizes and performance that a 100% reach is impossible.

The best that can be done is to narrow the scope down to a manageable subset of devices and versions that gives wide-enough coverage, and to serve those well.

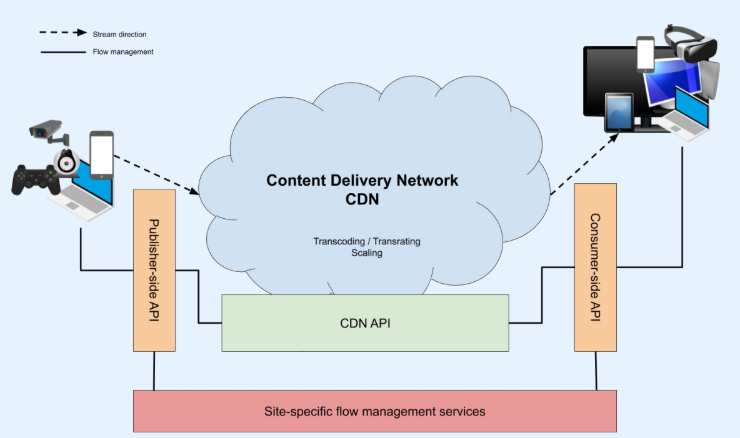

Site Logic Integrates With CDN API

Let’s look at a typical streaming system setup.

On the left we have the publisher side with the users whose devices produce the video signal. On the right side we have the viewers or consumers.

In between there is a content delivery network (CDN) that solves the technical challenges mentioned earlier. The CDN needs to integrate with the business services, so the site’s developers usually create services that control how publishers and consumers interact with the CDN, using its API. There are software-as-a-service (SaaS) solutions that provide the CDN on a pay-as-you-go model. That can be a good alternative to building and maintaining the infrastructure yourself.

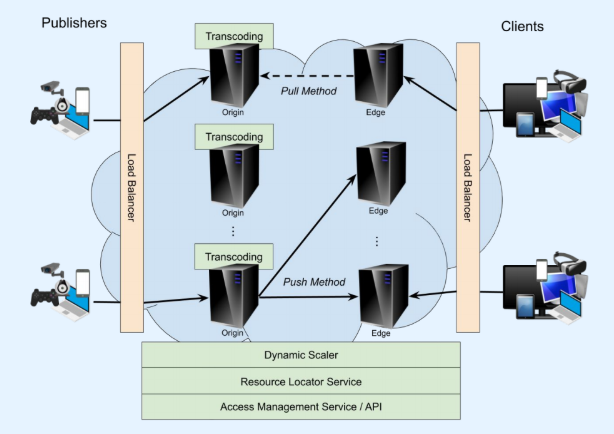

Scalability is the Name of the Game

Now let’s look at what a CDN’s architecture may look like.

Typically, both the publish side and the client side are fronted by a load balancer that redirects the traffic to one of many running servers.
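One simple strategy such a load balancer could use is "least connections": send each new client to the server currently handling the fewest. The class and server names below are hypothetical, and this is a sketch of the idea rather than a production balancer.

```python
class LeastConnectionsBalancer:
    """Route each new connection to the server with the fewest active ones."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        # Active connection count per server, in registration order.
        self._active = {server: 0 for server in servers}

    def acquire(self) -> str:
        """Pick a server for a new connection and count it as active."""
        # On a tie, min() keeps the first server in registration order.
        server = min(self._active, key=self._active.get)
        self._active[server] += 1
        return server

    def release(self, server: str) -> None:
        """Mark one connection on `server` as closed."""
        if self._active[server] > 0:
            self._active[server] -= 1
```

Because video sessions are long-lived and uneven in length, counting active connections tends to spread load more evenly than a plain round-robin, though that choice is a design assumption here rather than something the architecture requires.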

Video stream scalability is normally based on an origin-edge architecture, which is well suited to one-to-many scenarios. The source publishes the content to an origin server (or to several, when redundancy is needed in critical scenarios). Edge servers are then either fed the content or fetch it on demand: the origin can push the stream to all registered edge servers (the push method), or the edge servers can request the stream once there is at least one client for it (the pull method). Pulling lets the system consume fewer resources while idle, whereas pushing avoids a buffering delay when the first client connects to an edge. As always, there are trade-offs.
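The difference between push and pull can be sketched with two small classes. Everything here is a simplification under stated assumptions: a stream is modelled as a fixed list of chunks rather than a continuous feed, and the `Origin` and `Edge` classes and their methods are hypothetical names.

```python
class Origin:
    """Holds published streams and counts how often edges fetch from it."""

    def __init__(self):
        self._streams = {}
        self.fetch_count = 0

    def publish(self, stream_id, chunks, push_to=()):
        """Store a stream and optionally push it to edges right away."""
        self._streams[stream_id] = list(chunks)
        for edge in push_to:
            edge.receive(stream_id, self._streams[stream_id])

    def fetch(self, stream_id):
        """Called by an edge that pulls the stream on demand."""
        self.fetch_count += 1
        return self._streams[stream_id]


class Edge:
    """Serves viewers from a local copy, pulling from the origin if needed."""

    def __init__(self, origin):
        self._origin = origin
        self._cache = {}

    def receive(self, stream_id, chunks):
        # Push method: the origin hands over the stream before anyone asks.
        self._cache[stream_id] = chunks

    def play(self, stream_id):
        # Pull method: only the first viewer triggers a trip to the origin.
        if stream_id not in self._cache:
            self._cache[stream_id] = self._origin.fetch(stream_id)
        return self._cache[stream_id]
```

An edge that was pushed to answers its first viewer without contacting the origin, while a pull edge contacts it exactly once and then serves every later viewer from its own copy.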

The end clients can only connect to edge servers and play the streams.

To orchestrate this, the CDN must provide a way for the client site to locate the resources. It must also dynamically scale based on demand and provide adequate access management and security features.

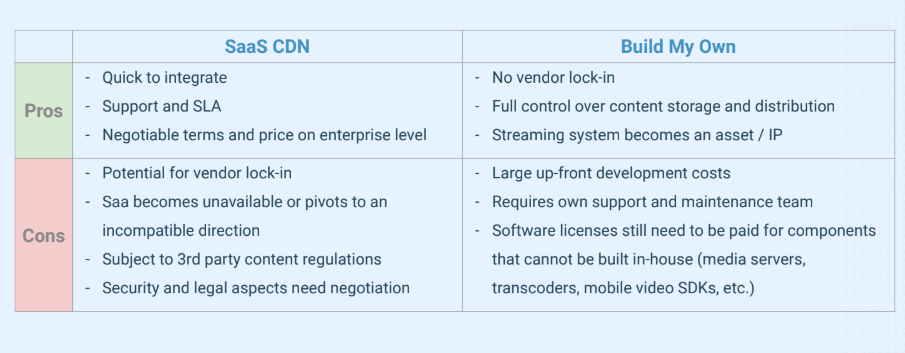

Subscribe or Build Your Own CDN?

Of course the decision to build a delivery network from scratch comes with trade-offs which need to be weighed carefully.

Using a service is, of course, cheaper to integrate and less hassle to maintain. However, you essentially tie your service’s fate to a third-party provider. Over time the dependencies can become tightly coupled, making it expensive to switch to a different solution: a classic vendor lock-in situation.

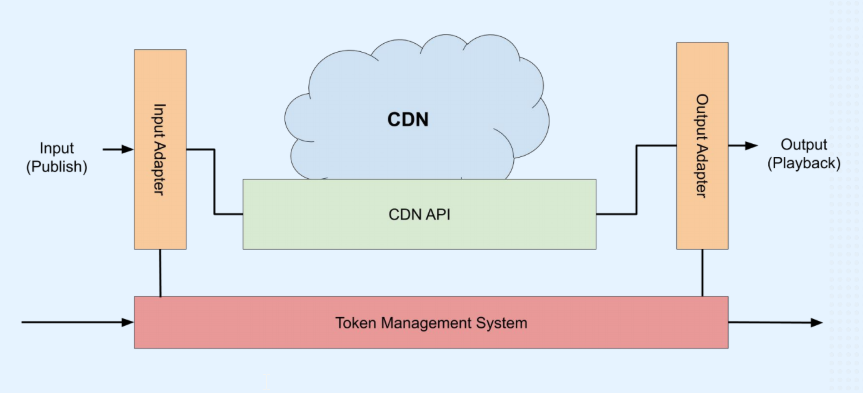

Best Practices

A common approach to protecting the business from vendor lock-in is to build adapters between your site and the SaaS API. Doing this in a generic way should allow your development team to quickly connect to another vendor’s API.
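A minimal sketch of such an adapter layer follows. The two vendor clients are invented stand-ins, not real SDKs, and the interface methods (`create_stream`, `playback_url`) are assumptions about what a site might need.

```python
from abc import ABC, abstractmethod


class StreamingProvider(ABC):
    """The only interface the site's own code is allowed to depend on."""

    @abstractmethod
    def create_stream(self, title: str) -> str:
        """Create a stream and return an opaque stream id."""

    @abstractmethod
    def playback_url(self, stream_id: str) -> str:
        """Return the URL viewers should load for this stream."""


class VendorAClient:
    """Stand-in for one vendor's SDK (hypothetical, not a real library)."""

    def new_channel(self, name: str) -> dict:
        return {"channel_id": f"a-{name}"}

    def hls_link(self, channel_id: str) -> str:
        return f"https://vendor-a.example/{channel_id}/index.m3u8"


class VendorBClient:
    """Stand-in for a second vendor with a differently shaped API."""

    def start(self, label: str) -> str:
        return f"b-{label}"

    def urls(self, stream_key: str) -> dict:
        return {"hls": f"https://vendor-b.example/live/{stream_key}.m3u8"}


class VendorAAdapter(StreamingProvider):
    def __init__(self, client: VendorAClient):
        self._client = client

    def create_stream(self, title: str) -> str:
        return self._client.new_channel(title)["channel_id"]

    def playback_url(self, stream_id: str) -> str:
        return self._client.hls_link(stream_id)


class VendorBAdapter(StreamingProvider):
    def __init__(self, client: VendorBClient):
        self._client = client

    def create_stream(self, title: str) -> str:
        return self._client.start(title)

    def playback_url(self, stream_id: str) -> str:
        return self._client.urls(stream_id)["hls"]


def start_broadcast(provider: StreamingProvider, title: str) -> str:
    """Site logic: written once against the interface, unaware of the vendor."""
    stream_id = provider.create_stream(title)
    return provider.playback_url(stream_id)
```

Switching vendors then means writing one new adapter class. `start_broadcast` and the rest of the site logic stay untouched.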

Modelling the interactions as sessions with token-based authorization allows permissions to be controlled in real time.
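One possible way to implement this is with short-lived, signed tokens plus a revocation list, as sketched below. The token layout, the secret and the function names are all assumptions made for illustration. A production system would more likely rely on an established token format and proper key management.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET_KEY = b"demo-secret-change-me"  # hypothetical; load from secure config
REVOKED_SESSIONS = set()               # sessions cut off before their expiry


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(session_id: str, action: str, ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Create a token allowing `action` (e.g. "publish") for a limited time.

    Assumes `action` never contains ":" since that is the field separator.
    """
    issued_at = time.time() if now is None else now
    expires = int(issued_at + ttl_seconds)
    payload = f"{session_id}:{action}:{expires}"
    return f"{payload}:{_sign(payload)}"


def verify_token(token: str, action: str, now: Optional[float] = None) -> bool:
    """Check signature, requested action, expiry and revocation status."""
    parts = token.rsplit(":", 3)
    if len(parts) != 4:
        return False
    session_id, token_action, expires, signature = parts
    payload = f"{session_id}:{token_action}:{expires}"
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(signature.encode(), _sign(payload).encode()):
        return False
    current = time.time() if now is None else now
    return (token_action == action
            and current < int(expires)
            and session_id not in REVOKED_SESSIONS)
```

Adding a session id to `REVOKED_SESSIONS` makes every token of that session fail on its next check, which is what allows a publisher or viewer to be cut off while a stream is still running.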